
- contributions/sdds-deep/SDDS-DEEP.tex 1 addition, 2 deletions

- contributions/sdds-xdc/SDDS-XDC.tex 1 addition, 1 deletion

- contributions/storage/danni.PNG 0 additions, 0 deletions

- contributions/storage/storage.tex 49 additions, 48 deletions

- contributions/summerstudent/.gitkeep 0 additions, 0 deletions

- contributions/summerstudent/MLalgorithms.png 0 additions, 0 deletions

- contributions/summerstudent/StoRM-full-picture.png 0 additions, 0 deletions

- contributions/summerstudent/StoRM.png 0 additions, 0 deletions

- contributions/summerstudent/kibana.png 0 additions, 0 deletions

- contributions/summerstudent/summerstudent.tex 90 additions, 0 deletions

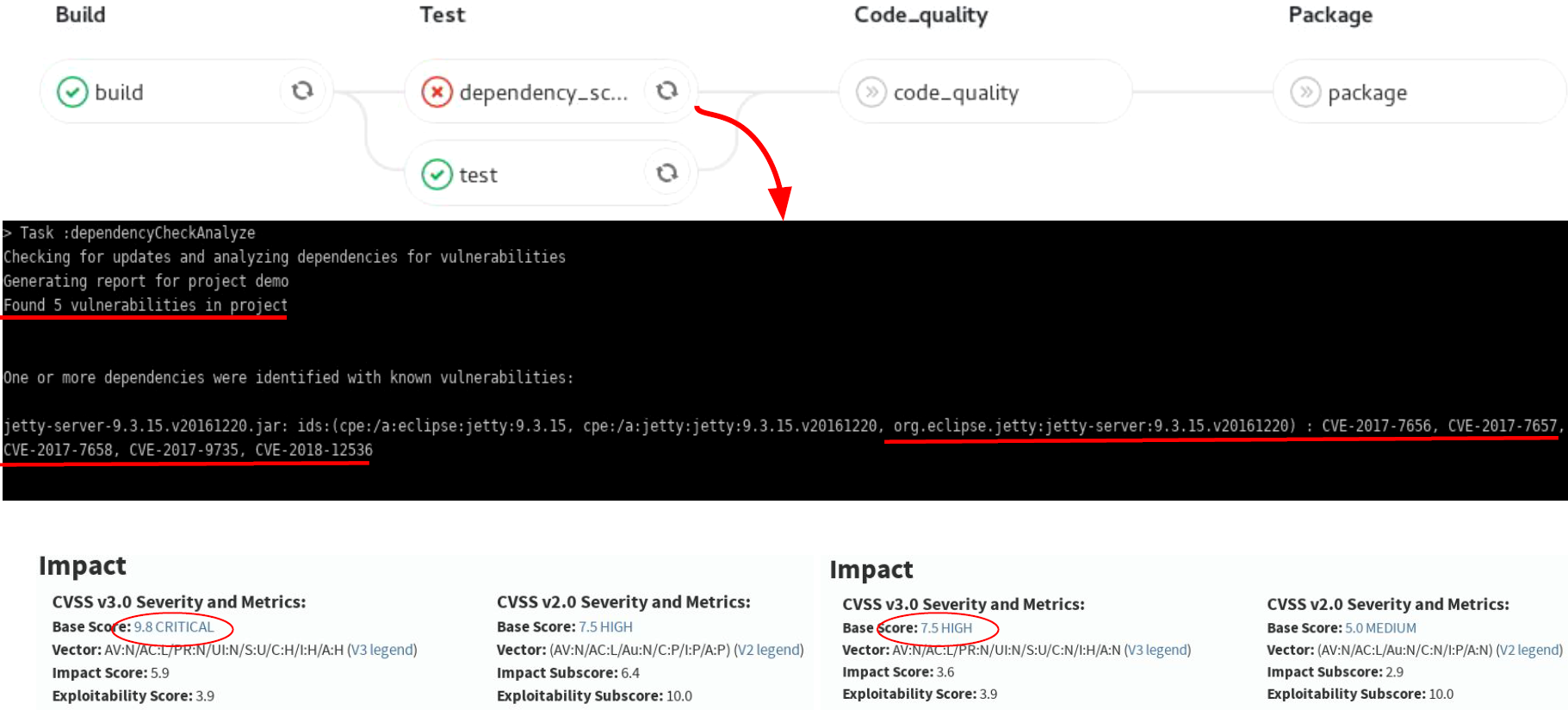

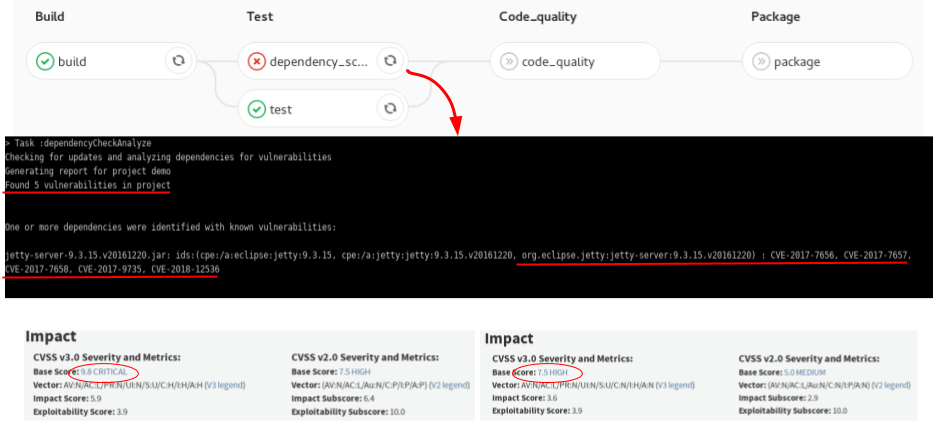

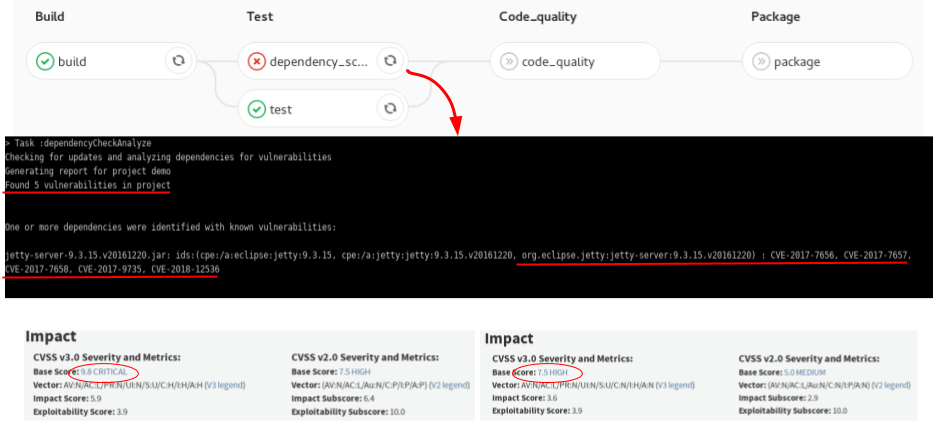

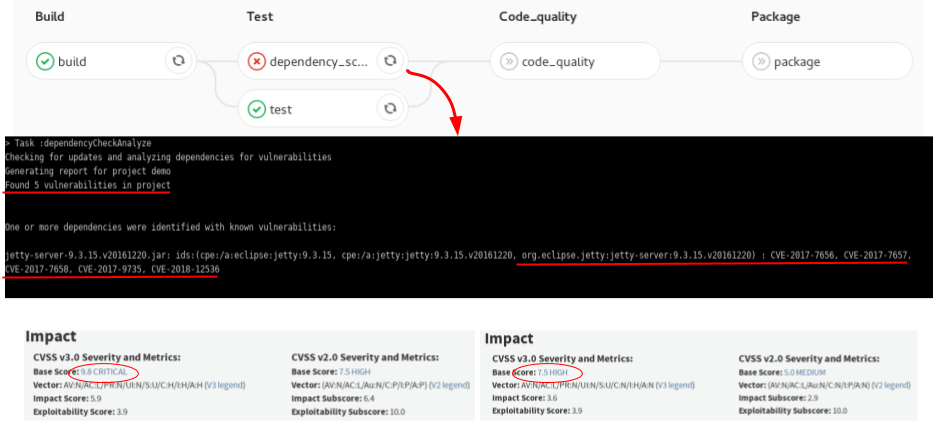

- contributions/sysinfo/deps_scan.png 0 additions, 0 deletions

- contributions/sysinfo/sysinfo.tex 66 additions, 40 deletions

- contributions/tier1/pledge.png 0 additions, 0 deletions

- contributions/tier1/tier1.tex 30 additions, 36 deletions

- contributions/transfer/transfer.pdf 0 additions, 0 deletions

- contributions/user-support/main.tex 11 additions, 10 deletions

- contributions/virgo/AdV_computing_CNAF.tex 20 additions, 20 deletions

- contributions/xenon/main.tex 2 additions, 2 deletions

- immagini/.gitkeep 0 additions, 0 deletions

- immagini/Additional-Information_18_web.jpg 0 additions, 0 deletions

contributions/storage/danni.PNG

0 → 100644

1.52 MiB

contributions/summerstudent/.gitkeep

0 → 100644

contributions/summerstudent/MLalgorithms.png

0 → 100644

25.4 KiB

381 KiB

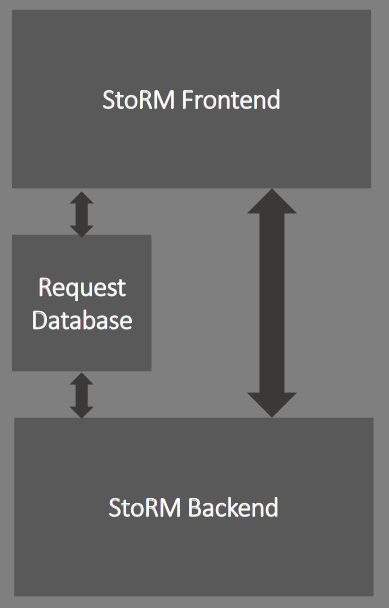

contributions/summerstudent/StoRM.png

0 → 100644

17 KiB

contributions/summerstudent/kibana.png

0 → 100644

388 KiB

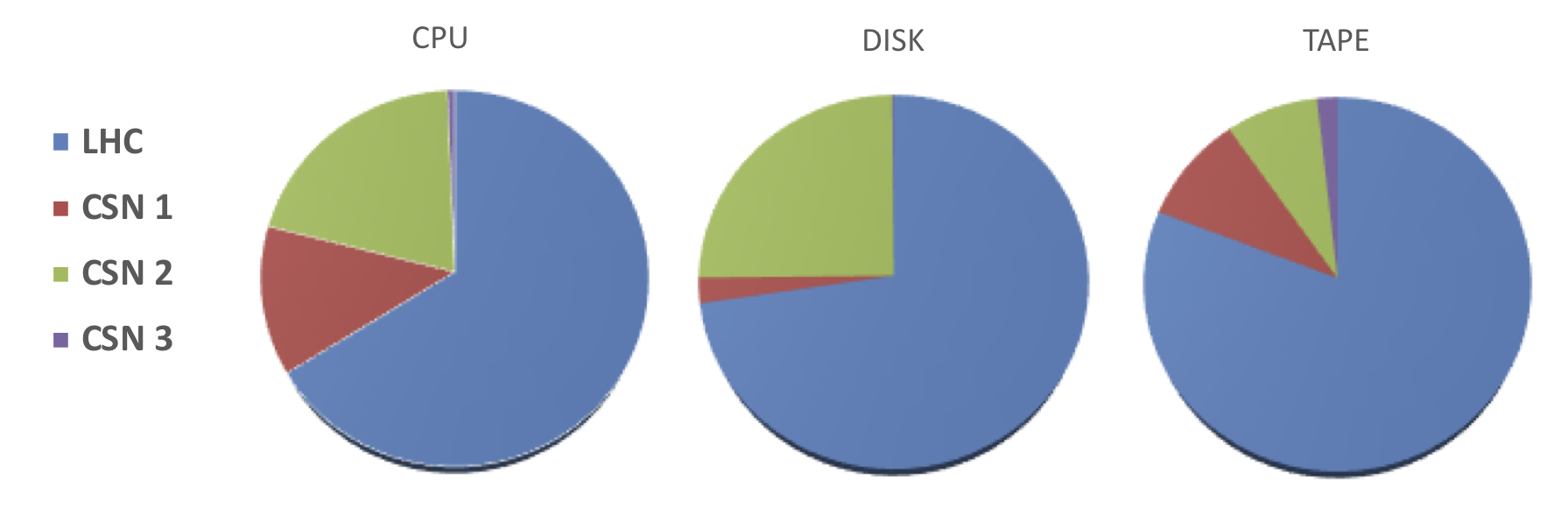

contributions/tier1/pledge.png

0 → 100644

180 KiB

contributions/transfer/transfer.pdf

0 → 100644

File added

immagini/.gitkeep

0 → 100644

immagini/Additional-Information_18_web.jpg

0 → 100644

752 KiB